“There are three kinds of lies: lies, damned lies, and statistics.”

Have you ever stared at an online poll, wanting to vote, while something in the back of your mind kept telling you that your response might not stay confidential?

And vice versa: have you ever created a poll, looking forward to analyzing plenty of votes, while somehow knowing that most respondents probably won’t tell you the truth?

Apparently, you’re not alone.

When asked sensitive or personal questions, or even questions that respondents feel may portray them in a certain way (socially, financially, professionally, etc.), people tend to either lie or not participate at all. This phenomenon is called response bias, and it covers multiple factors that lead people to respond falsely or inaccurately to questions, for example:

- Pressure to conform to social standards

- Trying to please the questioner

- Fear of consequences

Existing Poll Systems

Whereas in-person or telephone surveys can make respondents feel uncomfortable or push them toward untruthful answers, online polls manage to provide a safer environment for respondents.

Or do they?

As it turns out, that nagging uncertainty about whether the votes are truly secure is well founded: in a standard online poll, the creator of the survey (or an adversary, for that matter) typically has access to additional voter information that can compromise privacy.

On LinkedIn, for example, the poll creator can actually see how each user voted:

“How you vote is visible to the author of the poll (i.e. the person or organization who posts the poll) … While LinkedIn only shares how you vote with the poll author, we cannot guarantee that a poll author will not disclose your vote to others.”

This, of course, is not unique to the LinkedIn poll system. Twitter shows users how many votes were cast and the percentage per answer. Although the poll creator doesn’t get to see how each specific user voted, each participant still has to trust the data processor (which recent events make very difficult), since Twitter still holds the user’s IP address and actual vote.

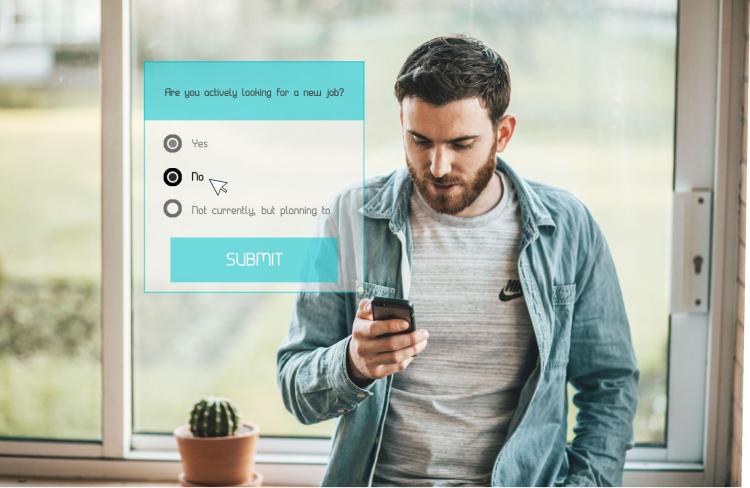

Think about an organization that wants to ask its employees if they are currently looking for a new position. Even if the poll is not in-person but rather shared online (emailed, for example), it still poses a privacy concern.

The most popular survey services for organizations actually offer features such as:

- Tracking users’ IP addresses

- Live analytics (analysis of votes in real-time)

- Time spent on answering the survey

- Country

- City

- Operating system

Some of the services report individual responses outright, and even go as far as tracking individual votes across multiple surveys, so the organization can easily cross-reference the answers the same individual gave to different questionnaires.

Imagine the company has one local team and one offshore team; simply noticing when a vote was cast may tell the poll creator which team a batch of votes came from. An even more sinister approach would be to intentionally distribute the poll to small batches of people, or even one by one, and wait for a response. Even if such an inference process is unlikely to occur, the mere possibility is sometimes enough to make people vote untruthfully or not vote at all (and given the aforementioned features in popular poll services, such analysis is probably more common than we think).

Why should poll creators choose our DP-Poll?

Using our differentially private poll generator, participants can feel safe knowing that their individual responses are protected and that only high-level insights are delivered once enough information has been collected. Telling voters that the poll uses this technique is likely to increase both participation rates and, more importantly, truthful voting rates, not to mention the bonus points for looking after voters’ privacy in the first place.

Why should participants feel safe when voting in our DP-Poll?

We implemented the “untrusted curator” model of Differential Privacy, where the entity collecting the data may not be trustworthy. This means users don’t have to trust the poll curator to use their data responsibly. The DP-Poll is designed to preserve privacy end-to-end, so that even an observer who sees a specific person’s incoming vote cannot reliably infer how that person actually answered.

How does it work?

In a nutshell, Local Differential Privacy (LDP) is when statistical noise is added to each individual data point – in this case, each participant’s response to the survey – to guarantee that no one can reliably infer the provided answer, regardless of what other information sources are available.
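Stated a little more formally, using the standard textbook definition rather than anything specific to DP-Poll: a randomized mechanism $M$ (the procedure that turns a true answer into the noisy answer that is actually sent) satisfies $\varepsilon$-local differential privacy if, for every pair of possible true answers $v$ and $v'$ and every possible reported value $y$,

$$
\Pr[M(v) = y] \;\le\; e^{\varepsilon} \cdot \Pr[M(v') = y].
$$

The symbols $M$, $v$, $v'$, $y$ and $\varepsilon$ are notation introduced here for illustration. A smaller $\varepsilon$ means any reported value is almost equally likely under every true answer, so the report reveals less about the individual.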

We implemented an LDP algorithm based on the paper Optimizing Locally Differentially Private Protocols. Specifically, we use the Symmetric Unary Encoding algorithm, which builds on the approach of Google’s RAPPOR algorithm.
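To make the idea concrete, here is a minimal Python sketch of Symmetric Unary Encoding as it is commonly described: each vote is one-hot encoded, every bit is randomized independently on the voter's side, and the collector corrects the aggregated counts for the known noise. This is an illustration under assumptions, not the DP-Poll implementation; the function names, the `epsilon` parameter, and the use of Python's `random` module (which is not a cryptographically secure source of randomness) are all hypothetical choices made for this example.

```python
import math
import random


def sue_probabilities(epsilon):
    """Return (p, q): the probability of reporting 1 for the chosen
    option's bit (p) and for every other bit (q). In the symmetric
    variant p + q = 1."""
    p = math.exp(epsilon / 2) / (math.exp(epsilon / 2) + 1)
    return p, 1 - p


def sue_perturb(choice, num_options, epsilon, rng):
    """Client side: one-hot encode `choice`, then randomize each bit."""
    p, q = sue_probabilities(epsilon)
    report = []
    for i in range(num_options):
        prob_one = p if i == choice else q
        report.append(1 if rng.random() < prob_one else 0)
    return report


def sue_estimate(reports, epsilon):
    """Collector side: unbiased estimate of how many voters chose
    each option, computed from the noisy reports only."""
    n = len(reports)
    p, q = sue_probabilities(epsilon)
    num_options = len(reports[0])
    counts = [sum(r[i] for r in reports) for i in range(num_options)]
    return [(c - n * q) / (p - q) for c in counts]


if __name__ == "__main__":
    rng = random.Random(1)
    # Hypothetical poll: 3 options, 20,000 voters.
    votes = [0] * 10000 + [1] * 6000 + [2] * 4000
    reports = [sue_perturb(v, 3, 2.0, rng) for v in votes]
    print([round(x) for x in sue_estimate(reports, 2.0)])
```

Two different votes produce reports that differ in the distribution of exactly two bits, so the worst-case probability ratio is (p/q)², which equals e^ε for the probabilities above. The estimates are noisy for any single run, and the relative error shrinks as the number of voters grows.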

Intuition:

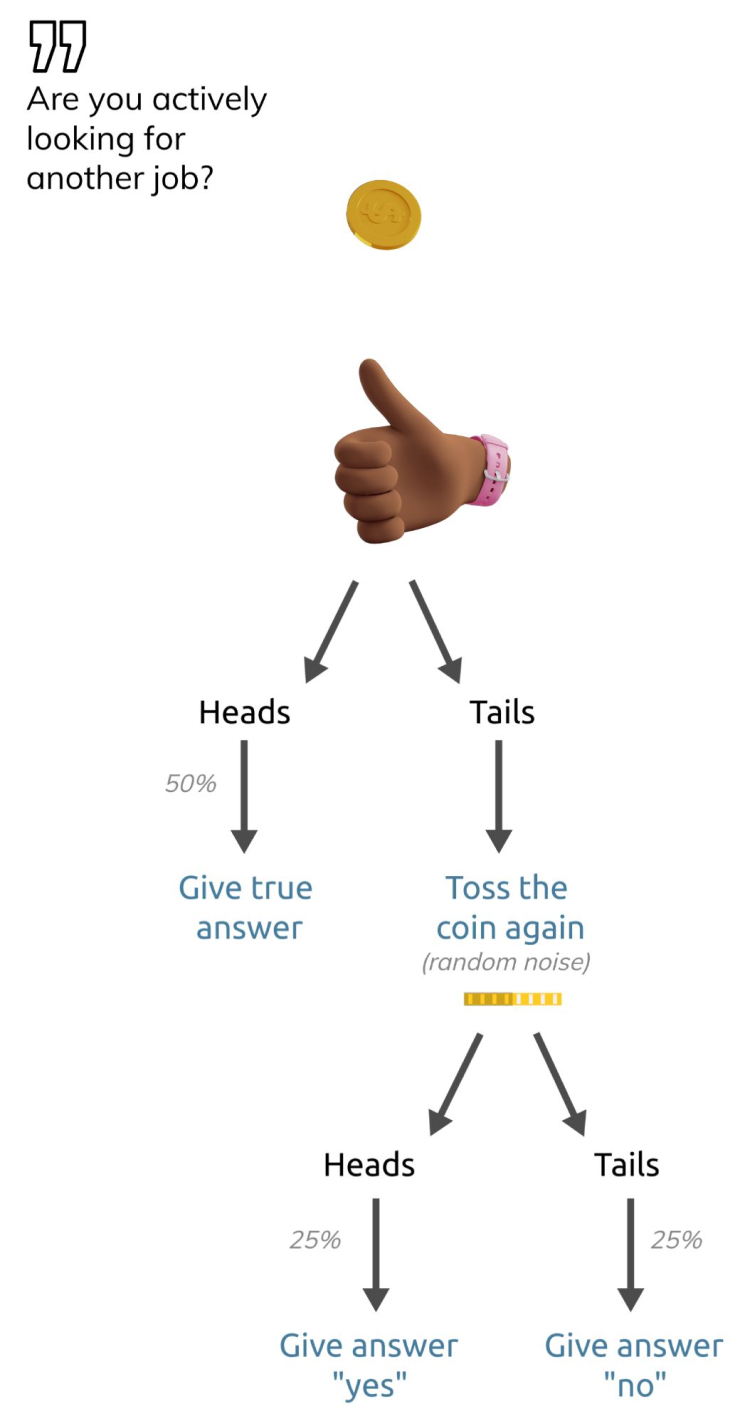

Let’s play a game: An employer asks you a sensitive question – for simplicity, let’s assume it’s a “yes or no” question (such as “are you actively looking for a new job?”) – and you answer according to the following flow chart:

In other words, you flip a coin. If it comes up Heads, you answer truthfully. If it comes up Tails, you toss the coin a second time and answer “Yes” if you get Heads and “No” otherwise. The number of coin tosses and the result of each toss remain confidential, since you only provide the final answer at the end of the process.

This means that an employer who looks at your answer alone cannot reliably infer anything about you as an individual: even if you answered “Yes”, it might be because you are actually looking for another job, or it might simply be because you got Tails and then Heads and gave the default answer of “Yes”. But although the employer learns very little from a single answer, when enough people participate they can learn a lot about the overall responses, and the statistical error shrinks as the number of voters grows.
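The coin game is easy to simulate. In the sketch below (function names and the simulated population are made up for illustration), a “Yes” is reported with probability 0.5 × (true yes rate) + 0.25, so the collector can recover the true rate as 2 × (observed yes rate − 0.25) without knowing any individual's real answer.

```python
import random


def randomized_response(truth, rng):
    """Answer a yes/no question using the two-coin protocol."""
    if rng.random() < 0.5:       # first toss is Heads: answer truthfully
        return truth
    return rng.random() < 0.5    # first toss is Tails: second toss decides


def estimate_true_yes_rate(reports):
    """Undo the known noise: observed = 0.5 * true_rate + 0.25."""
    observed = sum(reports) / len(reports)
    return 2 * (observed - 0.25)


def simulate(num_voters, true_yes_rate, seed=0):
    """Simulate a population and return the estimated yes rate."""
    rng = random.Random(seed)
    truths = [rng.random() < true_yes_rate for _ in range(num_voters)]
    reports = [randomized_response(t, rng) for t in truths]
    return estimate_true_yes_rate(reports)


if __name__ == "__main__":
    for n in (100, 10_000, 1_000_000):
        print(n, round(simulate(n, true_yes_rate=0.3), 3))
```

With only a hundred voters the estimate can be far from the true 30%, while with many voters it typically lands close to it. In this game a voter whose true answer is “Yes” reports “Yes” with probability 3/4, and a voter whose true answer is “No” reports “Yes” with probability 1/4, so the ratio between the two is 3. In differential privacy terms, that corresponds to a privacy parameter of ε = ln 3.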

In practice, Local Differential Privacy introduces noise using more sophisticated algorithms than our simple example, but the example gives good intuition for how noise can protect the individual while still providing reliable information about a population.

Instructions

- Create your survey here.

- You’ll get an email with a direct link to your survey and a unique id.

- If you want to embed your survey in a website, copy the following code snippet and replace the value of wSurveyId with your own survey id. Otherwise, just share your survey URL directly.

<script type='text/javascript'>
  wWidth = "430px";
  wHeight = "510px";
  wSurveyId = "4d4a8bdc-fc5c-43db-b343-2534d01ebf91"; // your survey id goes here
  document.write('<div id="DPSurvey"></div>');
  document.write('<scr'+'ipt type="text/JavaScript" src="https://www.pvml.com/static/assets/js/survey_widget/bootstrap.js"></scr'+'ipt>');
</script>